Premium Only Content

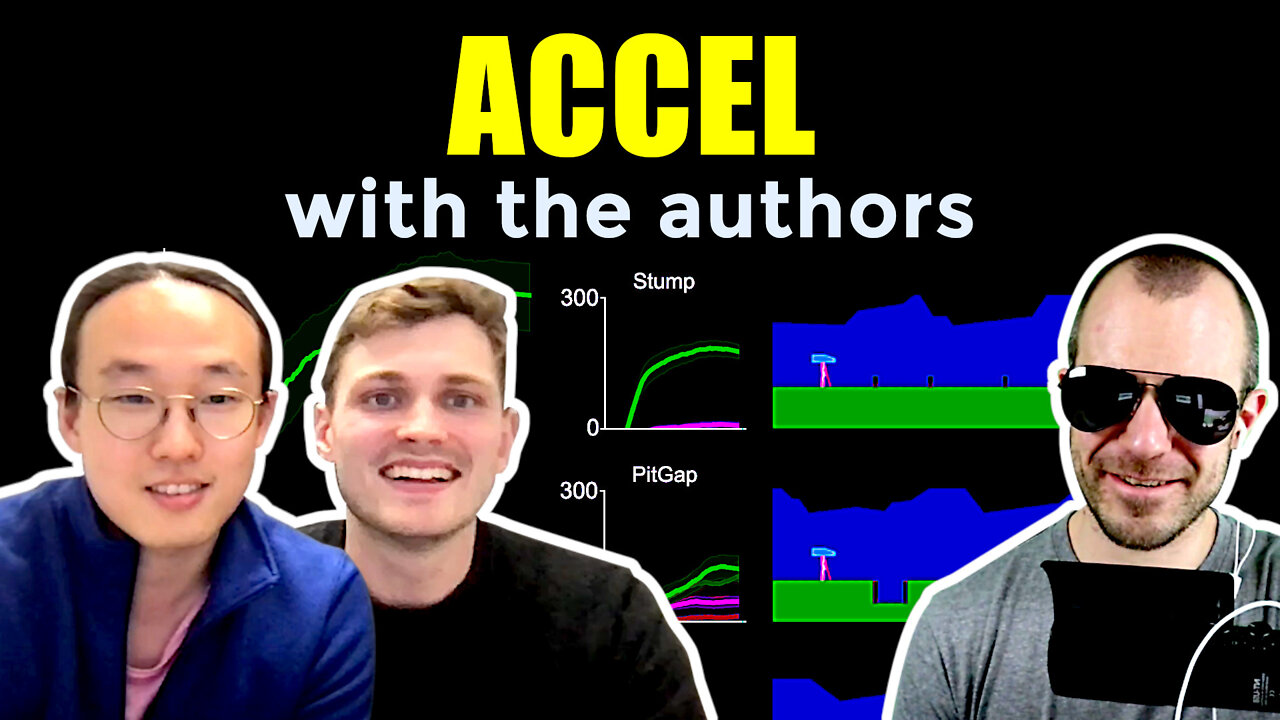

Author Interview - ACCEL: Evolving Curricula with Regret-Based Environment Design

#ai #accel #evolution

This is an interview with the authors Jack Parker-Holder and Minqi Jiang.

Original Paper Review Video: https://www.youtube.com/watch?v=povBD...

Automatic curriculum generation is one of the most promising avenues for reinforcement learning today. Multiple approaches have been proposed, each with its own advantages and drawbacks. This paper presents ACCEL, which takes the next step toward constructing curricula for multi-capable agents. ACCEL combines the adversarial adaptiveness of regret-based sampling methods with the level-editing capabilities usually found in evolutionary methods.
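For readers new to the regret objective discussed in the interview (8:10 and 11:45 in the outline), here is a minimal statement in the standard notation of the regret-based environment design literature. The symbols are our own choice rather than copied from the paper: θ is a level chosen by the teacher, π is the student policy, V^θ(π) is the student's expected return on θ, and π*_θ is an optimal policy for that level.

```latex
\begin{aligned}
\mathrm{Regret}^{\theta}(\pi) &= V^{\theta}(\pi^{*}_{\theta}) - V^{\theta}(\pi) \\
\pi^{\star} &\in \arg\min_{\pi} \, \max_{\theta \in \Theta} \, \mathrm{Regret}^{\theta}(\pi)
\end{aligned}
```

The teacher maximizes regret and the student minimizes it. Levels the student already solves have regret near zero, and so do levels nobody can solve (both terms are low), which is why regret pushes the teacher toward levels at the frontier of the student's abilities. Since V^θ(π*_θ) is unknown in practice, methods such as ACCEL score levels with approximations like the positive value loss mentioned at 14:20.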

OUTLINE:

0:00 - Intro

1:00 - Start of interview

4:45 - How did you get into this field?

8:10 - What is minimax regret?

11:45 - What levels does the regret objective select?

14:20 - Positive value loss (correcting my mistakes)

21:05 - Why is the teacher not learned?

24:45 - How much domain-specific knowledge is needed?

29:30 - What problems is this applicable to?

33:15 - Single agent vs population of agents

37:25 - Measuring and balancing level difficulty

40:35 - How does generalization emerge?

42:50 - Diving deeper into the experimental results

47:00 - What are the unsolved challenges in the field?

50:00 - Where do we go from here?

Website: https://accelagent.github.io

Paper: https://arxiv.org/abs/2203.01302

ICLR Workshop: https://sites.google.com/view/aloe2022

Book on topic: https://www.oreilly.com/radar/open-en...

Abstract:

It remains a significant challenge to train generally capable agents with reinforcement learning (RL). A promising avenue for improving the robustness of RL agents is through the use of curricula. One such class of methods frames environment design as a game between a student and a teacher, using regret-based objectives to produce environment instantiations (or levels) at the frontier of the student agent's capabilities. These methods benefit from their generality, with theoretical guarantees at equilibrium, yet they often struggle to find effective levels in challenging design spaces. By contrast, evolutionary approaches seek to incrementally alter environment complexity, resulting in potentially open-ended learning, but often rely on domain-specific heuristics and vast amounts of computational resources. In this paper we propose to harness the power of evolution in a principled, regret-based curriculum. Our approach, which we call Adversarially Compounding Complexity by Editing Levels (ACCEL), seeks to constantly produce levels at the frontier of an agent's capabilities, resulting in curricula that start simple but become increasingly complex. ACCEL maintains the theoretical benefits of prior regret-based methods, while providing significant empirical gains in a diverse set of environments. An interactive version of the paper is available at https://accelagent.github.io.
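The loop the abstract describes (replay high-regret levels, edit them, keep the edits that stay at the frontier) can be sketched in a few dozen lines of Python. This is a simplified illustration under our own assumptions, not the authors' implementation: the names `accel`, `rank_weights` and `run_toy_demo`, the replay probability, the buffer size and the toy "level = number of walls" environment are all hypothetical. `positive_value_loss` follows the clipped-GAE score from the prioritized level replay work that ACCEL builds on; the toy demo swaps in a hand-made score so it runs without an RL agent.

```python
import random


def positive_value_loss(rewards, values, gamma=0.99, lam=0.95):
    """Regret proxy from the PLR line of work: mean of GAE terms clipped at zero.

    `values` holds V(s_0..s_T), one more entry than `rewards` (bootstrap value).
    """
    T = len(rewards)
    gae, total = 0.0, 0.0
    for t in reversed(range(T)):
        delta = rewards[t] + gamma * values[t + 1] - values[t]
        gae = delta + gamma * lam * gae  # GAE recursion: A_t = delta_t + gamma*lam*A_{t+1}
        total += max(gae, 0.0)           # keep only positive advantages
    return total / T


def rank_weights(scores, temperature=1.0):
    """Rank-based prioritization: weight proportional to (1/rank)^(1/temperature)."""
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    weights = [0.0] * len(scores)
    for rank, i in enumerate(order, start=1):
        weights[i] = (1.0 / rank) ** (1.0 / temperature)
    total = sum(weights)
    return [w / total for w in weights]


def accel(generate, edit, score, train, iterations=200, buffer_size=50,
          p_replay=0.8, seed=0):
    """Hypothetical ACCEL-style loop over a buffer of [level, score] pairs."""
    rng = random.Random(seed)
    buffer = []
    for _ in range(iterations):
        if buffer and rng.random() < p_replay:
            weights = rank_weights([s for _, s in buffer])
            idx = rng.choices(range(len(buffer)), weights=weights)[0]
            level = buffer[idx][0]
            train(level)                    # student trains only on replayed levels
            buffer[idx][1] = score(level)   # refresh the replayed level's score
            candidate = edit(level, rng)    # small edit of a high-regret level
        else:
            candidate = generate(rng)       # fresh random level, evaluated but not trained on
        s = score(candidate)
        if len(buffer) < buffer_size:
            buffer.append([candidate, s])
        else:
            worst = min(range(len(buffer)), key=lambda i: buffer[i][1])
            if s > buffer[worst][1]:        # keep the candidate only if it beats the weakest level
                buffer[worst] = [candidate, s]
    return buffer


def run_toy_demo(iterations=200, seed=0):
    # Hypothetical toy: a level is an int "number of walls", the student a scalar skill.
    student = {"skill": 0.0}

    def score(level):
        # Stand-in for a regret estimate: peaks just past the student's skill,
        # low for solved (too easy) or hopeless (too hard) levels.
        return max(0.0, 1.0 - abs(level - student["skill"] - 1.0) / 3.0)

    def train(level):
        # Toy learning rule: only levels near the frontier improve the student.
        if student["skill"] <= level <= student["skill"] + 2.0:
            student["skill"] += 0.25

    def generate(rng):
        return rng.randint(0, 2)                        # simple random level

    def edit(level, rng):
        return max(0, level + rng.choice([-1, 1, 1]))  # mostly add one wall

    buffer = accel(generate, edit, score, train, iterations=iterations, seed=seed)
    return student, buffer


if __name__ == "__main__":
    student, buf = run_toy_demo()
    print("final skill:", student["skill"])
    print("hardest level in buffer:", max(level for level, _ in buf))
```

In the toy run the student only improves on levels near its current skill, so the buffer has to keep supplying slightly harder edits. This mirrors the "start simple but become increasingly complex" behavior the abstract describes, without any claim about the results reported in the paper.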

Authors: Jack Parker-Holder, Minqi Jiang, Michael Dennis, Mikayel Samvelyan, Jakob Foerster, Edward Grefenstette, Tim Rocktäschel

Links:

TabNine Code Completion (Referral): http://bit.ly/tabnine-yannick

YouTube: https://www.youtube.com/c/yannickilcher

Twitter: https://twitter.com/ykilcher

Discord: https://discord.gg/4H8xxDF

BitChute: https://www.bitchute.com/channel/yann...

LinkedIn: https://www.linkedin.com/in/ykilcher

BiliBili: https://space.bilibili.com/2017636191

If you want to support me, the best thing to do is to share the content :)

If you want to support me financially (completely optional and voluntary, but a lot of people have asked for this):

SubscribeStar: https://www.subscribestar.com/yannick...

Patreon: https://www.patreon.com/yannickilcher

Bitcoin (BTC): bc1q49lsw3q325tr58ygf8sudx2dqfguclvngvy2cq

Ethereum (ETH): 0x7ad3513E3B8f66799f507Aa7874b1B0eBC7F85e2

Litecoin (LTC): LQW2TRyKYetVC8WjFkhpPhtpbDM4Vw7r9m

Monero (XMR): 4ACL8AGrEo5hAir8A9CeVrW8pEauWvnp1WnSDZxW7tziCDLhZAGsgzhRQABDnFy8yuM9fWJDviJPHKRjV4FWt19CJZN9D4n
